ultralytics 8.0.196 instance-mean Segment loss (#5285)

Co-authored-by: Andy <39454881+yermandy@users.noreply.github.com>


parent 7517667a33

commit e7f0658744

72 changed files with 369 additions and 493 deletions
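The title refers to how the segmentation (mask) loss is averaged. The hunks shown on this page only touch documentation, so the loss change itself does not appear here. The sketch below assumes "instance-mean" means pooling every object instance in the batch into one mean, instead of first averaging within each image. Under that assumption, the two reductions differ whenever images hold different numbers of instances. The function names are hypothetical, and this is not the Ultralytics loss code.

```python
from typing import List, Sequence

# Hypothetical illustration; assumes "instance-mean" = one mean over all
# instances in the batch. Not the Ultralytics implementation.


def image_mean(per_image_losses: Sequence[Sequence[float]]) -> float:
    """Average instance losses within each image, then average over images.

    Images with no instances are skipped, so an instance in a crowded image
    carries less weight than one in a sparse image.
    """
    means = [sum(img) / len(img) for img in per_image_losses if len(img) > 0]
    return sum(means) / len(means) if means else 0.0


def instance_mean(per_image_losses: Sequence[Sequence[float]]) -> float:
    """Pool every instance in the batch and take a single mean.

    Each instance contributes equally, regardless of which image it is in.
    """
    flat: List[float] = [loss for img in per_image_losses for loss in img]
    return sum(flat) / len(flat) if flat else 0.0


if __name__ == "__main__":
    # Made-up per-instance losses: image 0 has one hard instance,
    # image 1 has three easy ones.
    batch = [[1.0], [0.0, 0.0, 0.0]]
    print(image_mean(batch))     # 0.5
    print(instance_mean(batch))  # 0.25
```

In this example the lone hard instance gets a weight of 1/2 under the image-first mean but only 1/4 under the pooled mean.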


@ -55,7 +55,7 @@ To perform object detection on an image, use the `predict` method as shown below


# Run inference on an image
everything_results = model(source, device='cpu', retina_masks=True, imgsz=1024, conf=0.4, iou=0.9)

# Prepare a Prompt Process object
prompt_process = FastSAMPrompt(source, everything_results, device='cpu')


@ -74,7 +74,7 @@ To perform object detection on an image, use the `predict` method as shown below


ann = prompt_process.point_prompt(points=[[200, 200]], pointlabel=[1])
prompt_process.plot(annotations=ann, output='./')
```

=== "CLI"

```bash
# Load a FastSAM model and segment everything with it


@ -66,10 +66,10 @@ You can download the model [here](https://github.com/ChaoningZhang/MobileSAM/blo


=== "Python"

```python
from ultralytics import SAM

# Load the model
model = SAM('mobile_sam.pt')

# Predict a segment based on a point prompt
model.predict('ultralytics/assets/zidane.jpg', points=[900, 370], labels=[1])

```
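Two examples on this page use a label of 1 to mark a foreground point, where 0 would mark background. One is this hunk (`points=[900, 370], labels=[1]`); the other is the FastSAM hunk above (`point_prompt(points=[[200, 200]], pointlabel=[1])`). SAM and MobileSAM feed the points into the model's prompt encoder. FastSAM instead segments everything first and then selects among the resulting masks. The sketch below illustrates only that selection-style idea on toy binary masks. It assumes points are (x, y) pixel coordinates, and `select_masks` is a hypothetical helper, not the `FastSAMPrompt` implementation.

```python
from typing import List, Sequence, Tuple

Mask = List[List[int]]  # mask[y][x] is 1 inside the object, 0 outside


def select_masks(
    masks: Sequence[Mask],
    points: Sequence[Tuple[int, int]],
    labels: Sequence[int],
) -> List[int]:
    """Return indices of masks that cover every foreground point (label 1)
    and no background point (label 0). Hypothetical selection rule."""
    selected = []
    for i, mask in enumerate(masks):
        keep = True
        for (x, y), label in zip(points, labels):
            inside = mask[y][x] == 1
            if (label == 1 and not inside) or (label == 0 and inside):
                keep = False
                break
        if keep:
            selected.append(i)
    return selected


if __name__ == "__main__":
    # Two toy 4x4 candidate masks: left half and right half of the image.
    left = [[1, 1, 0, 0] for _ in range(4)]
    right = [[0, 0, 1, 1] for _ in range(4)]
    print(select_masks([left, right], points=[(0, 2)], labels=[1]))  # [0]
    print(select_masks([left, right], points=[(3, 1), (0, 0)], labels=[1, 0]))  # [1]
```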


@ -81,10 +81,10 @@ You can download the model [here](https://github.com/ChaoningZhang/MobileSAM/blo


=== "Python"

```python
from ultralytics import SAM

# Load the model
model = SAM('mobile_sam.pt')

# Predict a segment based on a box prompt
model.predict('ultralytics/assets/zidane.jpg', bboxes=[439, 437, 524, 709])

```
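The box prompt `bboxes=[439, 437, 524, 709]` is given as corner coordinates (x1, y1, x2, y2) in pixels of the source image. The hypothetical helper below sanity-checks such a box against the image size. It then converts the box to the normalized center/width/height form used by YOLO label files. The 1280x720 size in the usage line is an assumption about `zidane.jpg`; this commit does not state it.

```python
from typing import Sequence, Tuple


def xyxy_to_normalized_xywh(
    box: Sequence[float], img_w: int, img_h: int
) -> Tuple[float, float, float, float]:
    """Convert a pixel-space (x1, y1, x2, y2) box to normalized (xc, yc, w, h).

    Raises ValueError if the corners are out of order or outside the image.
    """
    x1, y1, x2, y2 = box
    if not (0 <= x1 < x2 <= img_w and 0 <= y1 < y2 <= img_h):
        raise ValueError(f"box {box} does not fit a {img_w}x{img_h} image")
    xc = (x1 + x2) / 2 / img_w
    yc = (y1 + y2) / 2 / img_h
    w = (x2 - x1) / img_w
    h = (y2 - y1) / img_h
    return xc, yc, w, h


if __name__ == "__main__":
    # Box prompt from the MobileSAM example; 1280x720 is an assumed image size.
    print(xyxy_to_normalized_xywh([439, 437, 524, 709], 1280, 720))
```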


@ -54,7 +54,7 @@ You can use RT-DETR for object detection tasks using the `ultralytics` pip packa


=== "CLI"

```bash
# Load a COCO-pretrained RT-DETR-l model and train it on the COCO8 example dataset for 100 epochs
yolo train model=rtdetr-l.pt data=coco8.yaml epochs=100 imgsz=640


@ -152,28 +152,27 @@ This comparison shows the order-of-magnitude differences in the model sizes and


Tests run on a 2023 Apple M2 MacBook with 16 GB of RAM. To reproduce this test:

!!! example ""

=== "Python"

```python
from ultralytics import FastSAM, SAM, YOLO

# Profile SAM-b
model = SAM('sam_b.pt')
model.info()
model('ultralytics/assets')

# Profile MobileSAM
model = SAM('mobile_sam.pt')
model.info()
model('ultralytics/assets')

# Profile FastSAM-s
model = FastSAM('FastSAM-s.pt')
model.info()
model('ultralytics/assets')

# Profile YOLOv8n-seg
model = YOLO('yolov8n-seg.pt')
model.info()

@ -193,7 +192,7 @@ To auto-annotate your dataset with the Ultralytics framework, use the `auto_anno


=== "Python"

```python
from ultralytics.data.annotator import auto_annotate

auto_annotate(data="path/to/images", det_model="yolov8x.pt", sam_model='sam_b.pt')

```
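As far as we can tell from the Ultralytics annotator, `auto_annotate` works in three steps. It detects objects with `det_model`, passes the detected boxes to `sam_model` as box prompts, and writes one label text file per image. The sketch below assumes the YOLO segmentation label convention: a class index followed by polygon vertices normalized to [0, 1] by image width and height. It shows how one such line could be formatted. `format_segment_line` is a hypothetical helper, not part of `ultralytics.data.annotator`.

```python
from typing import Sequence, Tuple


def format_segment_line(
    class_id: int,
    polygon: Sequence[Tuple[float, float]],
    img_w: int,
    img_h: int,
    decimals: int = 6,
) -> str:
    """Format one polygon as '<class> x1 y1 x2 y2 ...' with coordinates
    normalized by image width/height and clipped to [0, 1]."""
    if len(polygon) < 3:
        raise ValueError("a polygon needs at least 3 vertices")
    coords = []
    for x, y in polygon:
        coords.append(min(max(x / img_w, 0.0), 1.0))
        coords.append(min(max(y / img_h, 0.0), 1.0))
    return " ".join([str(class_id)] + [f"{c:.{decimals}f}" for c in coords])


if __name__ == "__main__":
    # A toy triangle in a hypothetical 640x480 image, labelled as class 0.
    print(format_segment_line(0, [(64, 48), (320, 48), (192, 240)], 640, 480))
    # 0 0.100000 0.100000 0.500000 0.100000 0.300000 0.500000
```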


@ -12,8 +12,7 @@ keywords: Meituan YOLOv6, object detection, Ultralytics, YOLOv6 docs, Bi-directi


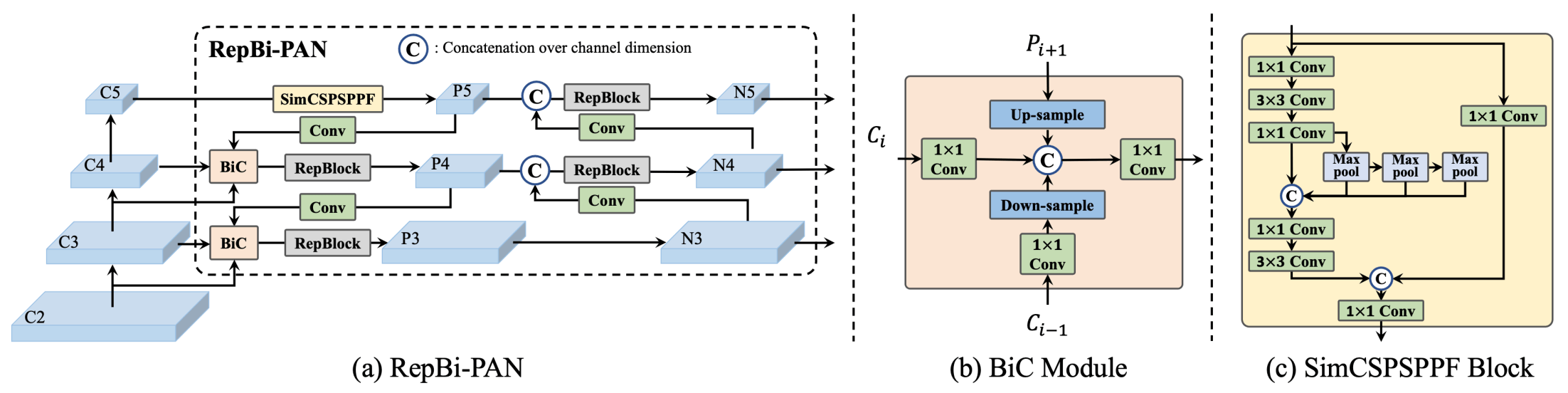


**Overview of YOLOv6.** Model architecture diagram showing the redesigned network components and training strategies that have led to significant performance improvements. (a) The neck of YOLOv6 (N and S are shown). Note for M/L, RepBlocks is replaced with CSPStackRep. (b) The structure of a BiC module. (c) A SimCSPSPPF block. ([source](https://arxiv.org/pdf/2301.05586.pdf)).


### Key Features


@ -51,7 +51,7 @@ YOLOv8 is the latest iteration in the YOLO series of real-time object detectors,


=== "Detection (Open Images V7)"


See [Detection Docs](https://docs.ultralytics.com/tasks/detect/) for usage examples with these models trained on [Open Images V7](https://docs.ultralytics.com/datasets/detect/open-images-v7/), which include 600 pre-trained classes.


| Model | size<br><sup>(pixels) | mAP<sup>val<br>50-95 | Speed<br><sup>CPU ONNX<br>(ms) | Speed<br><sup>A100 TensorRT<br>(ms) | params<br><sup>(M) | FLOPs<br><sup>(B) |
| ----------------------------------------------------------------------------------------- | --------------------- | -------------------- | ------------------------------ | ----------------------------------- | ------------------ | ----------------- |
| [YOLOv8n](https://github.com/ultralytics/assets/releases/download/v0.0.0/yolov8n-oiv7.pt) | 640 | 18.4 | 142.4 | 1.21 | 3.5 | 10.5 |
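The mAP<sup>val</sup> 50-95 column is the standard box metric. Average precision is computed at ten IoU thresholds (0.50 to 0.95 in steps of 0.05) and averaged over classes and thresholds. The sketch below shows only two of those ingredients: box IoU and the final average over thresholds. It assumes the per-threshold AP values are already class-averaged. It is not the Ultralytics evaluator, and no numbers from the table are reproduced by it.

```python
from typing import Sequence


def box_iou(a: Sequence[float], b: Sequence[float]) -> float:
    """IoU of two (x1, y1, x2, y2) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


# Ten IoU thresholds: 0.50, 0.55, ..., 0.95 (rounded to avoid float drift).
IOU_THRESHOLDS = [round(0.5 + 0.05 * i, 2) for i in range(10)]


def map50_95(ap_per_threshold: Sequence[float]) -> float:
    """Average class-averaged AP values, one per IoU threshold."""
    if len(ap_per_threshold) != len(IOU_THRESHOLDS):
        raise ValueError("expected one AP value per IoU threshold")
    return sum(ap_per_threshold) / len(ap_per_threshold)


if __name__ == "__main__":
    # Two 10x10 boxes overlapping by half along x.
    print(box_iou([0, 0, 10, 10], [5, 0, 15, 10]))  # 50 / 150, about 0.333
    print(IOU_THRESHOLDS)  # [0.5, 0.55, ..., 0.95]
```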
